How I'm Teaching About Generative AI

Every choice I make about how to discuss GenAI with my students is predicated on trusting them

The Important Work is a space for instructors (and occasionally students) at all levels—high school, college, and beyond—to share reflections about teaching writing in the era of generative AI.

This week’s post is by Cate Denial, and it originally appeared on her blog. Cate is the Bright Distinguished Professor of American History and Director of the Bright Institute at Knox College in Galesburg, Illinois. Cate is a pedagogical consultant who works with individuals, departments, and institutions in Australia, Canada, Ireland, the U.K. and the U.S. Cate’s book, A Pedagogy of Kindness, argues that instructors and institutions of higher education must urgently focus on compassion in the classroom. You can read more of Cate’s work on her blog and find her on Bluesky.

If you’re interested in sharing a reflection for The Important Work, you can find information here.—Jane Rosenzweig

We’ve been living with GenAI for some time now, and there are as many ways to approach its use (or not) in the classroom as there are instructors grappling with its existence. In this post, I sketch out how I’ve chosen to work with undergraduate students in my history seminars on issues surrounding GenAI: by focusing on their AI literacy, and on helping them reach a genuine liberal arts understanding of what is at stake with this technology.

Some caveats: my policies and practices are shaped by the particular institution for which I work. I rarely teach more than twenty-five students in any given class. I rarely lecture. I have ample opportunity to check in one-on-one with students as they work. The particular way I scaffold our conversations about GenAI reflects this. Some parts are easily scalable (such as the phone game) and some are less so.

(I also do not believe my way of approaching things is the only one. There is a range of ways to respond to the impact of GenAI on higher ed; this is just one of many.)

So let’s begin.

I have a clear policy in my syllabus about both laptop and GenAI use:

I’ve chosen to continue to allow students to use laptops, tablets, and phones in class, as I have not yet found an analog approach to assessment (in particular) that I find fully inclusive of disabled students who lack the documentation to qualify for formal accommodations. (If anyone has solved this particular puzzle, I am all ears!) I do, however, ban GenAI use in my classes. I try to explain both policies in my syllabus with the pedagogical choices I’m making front and center. I do not, for example, lead with “We will not use generative AI in this class,” but rather explain why that’s the case before getting down to details. I always have my students annotate the syllabus (thank you, Remi Kalir!), which precipitates a conversation about their thoughts, concerns, and questions related to anything in the document. This is, then, an invitation to a conversation about how the class functions, and is often a place to begin a discussion about GenAI.

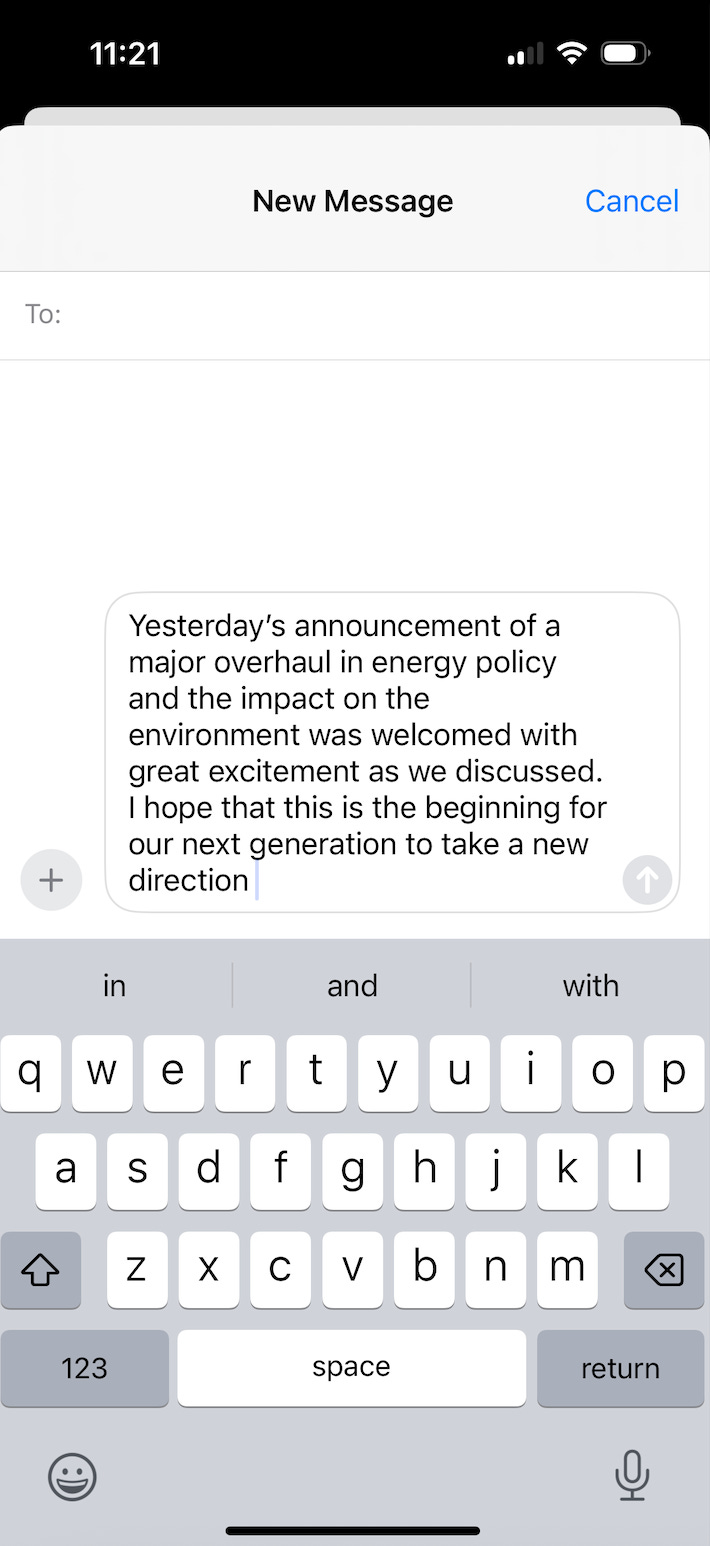

On the second or third day of class (depending on the schedule) I dedicate an entire class period to wrestling with GenAI. We begin class that day with a game. I ask everyone to take out their phone, pull up their texting app, and write the history of yesterday using only the predictive text options their app supplies. Here’s a screenshot of what that looked like on my phone today:

You can see the predictive text options my phone offers below the message I’ve written: it suggests ‘in,’ ‘and,’ and ‘with’ as words I might use next. These predictive text options are highly individualized, as our phones draw on past use to determine what we’re statistically likely to talk about most. (Apparently I’m very interested in environmental policies at the moment!) This means that when students read their histories of yesterday aloud, the results are unique and often completely wild stories that may or may not have anything to do with how they spent the day before. (I did not spend yesterday concerned with energy policies, for example, although I did get a text to say my budget-billing payment for utilities was going up.)

The game provides an opening to discuss how GenAI models work. GenAI models draw on the vast body of data they were trained on to statistically predict which words are likely to follow other words, building competent-sounding sentences. GenAI products do not think; they do not feel; they do not lie. They simply predict that, in X instances of Y words occurring in Z order, it is highly probable that A will be the next word in a phrase. My students and I talk about the enormous amount of data these models work from (a corpus GenAI companies built by stealing copyrighted works). Because that corpus is so large, the sentences and paragraphs GenAI builds match our prompts far more reliably than our phones’ suggestions do, and they often sound good.

To reinforce the lessons of this game, I assign Mark Riedl’s “A Very Gentle Introduction to Large Language Models Without the Hype” from 2023. In much greater detail than I can hope to delve into in a single class, Riedl walks through how LLMs work (complete with handy graphics), and his explanations always come as something of a surprise to students who have assumed that GenAI models have agency, when they don’t.

Here’s where students might be forgiven for saying, “So what?” That’s why I assign Carl Hendrick’s “Ultra-Processed Minds: The End of Deep Reading and What It Costs Us,” from 2025. This essay is the most marvelous prompt for a meaningful conversation about the many difficulties we’ve all faced in the last several years, and the impact that world events can have on our practices of reading and thinking. I’m no exception to this: my own reading is not as steady as it once was, and I struggle with my concentration. Students are relieved to learn that they are not singularly at fault for struggling, and that thousands of other people share this experience. The essay also offers an opening to think about why we write, why we read, how we think, and what we give up when we try to take a shortcut through that process.

From here my students and I grapple with the ethics of GenAI use. I pick several articles from my Against Generative AI blog post, allowing students to consider environmental concerns, labor exploitation (particularly in Africa), privacy issues, ableism, biases, mental health effects, and the ethics of using something entirely built upon stolen work. Students supplement these readings with what they already know or have experienced about GenAI, or news stories they’re aware of that offer a more positive take on LLMs. Our conversations are robust, but in every iteration of this conversation I have had with students in the last two years, there is one constant: almost no one knows about the ethical issues surrounding GenAI before they delve into the readings I’ve assigned. (We talk about why that might be the case, too.)

Lastly, we talk about disinformation, a bigger issue than GenAI alone but one that history students have to be particularly aware of. We discuss the tells that marked an image as fake early in GenAI’s lifetime, and how both more sophisticated programming and individual effort can circumvent many of these tells nowadays. (Still, it’s always good practice to look for six-fingered hands.) We also discuss how often GenAI will statistically predict a citation that looks flawless but is in fact only a representation of how citations usually appear. Such a citation is, in other words, fake. That is why students must genuinely read anything they put in a bibliography or footnote rather than merely padding either one. (We also talk about how to identify articles that are based on erroneous, even AI-generated, evidence, a skill every historian needs.)

It’s a lot to get through in one class period; sometimes this spills into two. It’s worth it to me to spend this time with GenAI, having deep conversations about what it can and cannot do, since in my experience it greatly lowers the chance that a student will use GenAI down the road.

Every choice I make about how to discuss GenAI with my students is predicated on trusting them: trusting them to be partners in figuring all of this out; trusting them to take the project seriously and try out multiple lines of thought; trusting them to respect the ban on GenAI in my class. If someone uses GenAI anyway, I can deal with that when it happens. I would rather deal with outliers as and when they occur than approach every student with suspicion from the outset.

No single approach is perfect, and I’m sure I will refine this way of dealing with GenAI for years to come. If and when GenAI can offer student historians something they cannot achieve under their own steam, I’ll consider rethinking my position. But I do relish getting to enter into wide-ranging conversations with students about our shared identity as historians, and that, perhaps, is the best part of all of this work.
